Recent Files, Jump Lists, and Application-Level Context

This series is deliberately slow. It’s trying to build an instinct, not a checklist.

In the first article, we framed Windows artefacts as partial, contextual evidence rather than deterministic indicators. In the ShellBags post, we applied that framing to a common mistake: treating shell navigation as proof of file access or intent. ShellBags are a record of what File Explorer remembers about where a user navigated and how those folders were rendered. That’s valuable. It’s also easy to over-interpret.

This post stays in the same lane, but it shifts layers. Instead of asking “where did the user navigate?”, we ask “what did applications remember as recent context?”

Recent files and Jump Lists are often used as shorthand for document access. They show up in insider investigations, data staging cases, and disputes about who opened what. They’re also one of the fastest ways an investigation can become sloppy. The reasoning error is consistent: an analyst sees a file in a “recent” list and leaps to “the user opened and read it”. That leap skips over several steps that matter: how the entry was created, what the application considers “use”, and whether the user’s interaction was deliberate.

The goal here is to make those steps explicit. Recent artefacts can be strong evidence of reference and interaction. They can also be evidence of background behaviour and convenience features. They’re rarely proof of comprehension or intent.

From Shell-Mediated Behaviour to Application-Mediated Context

Shell-level artefacts and application-level artefacts exist to solve different problems, so they record different things.

The shell exists to help users navigate a namespace. It tracks folders, views, and interaction patterns that make exploration faster. ShellBags sit in that world. They tell you that a folder became part of a user’s known environment. They’re often created through Explorer windows, but they can also be created through common dialogs and other shell mechanics. That’s part of why they’re easy to misread.

Application-level recency is narrower, and sometimes deeper. Applications need quick ways to return users to what they were doing. They need to surface “recent” files, “pinned” items, and frequent locations. Windows also provides a set of plumbing to make this consistent across the platform. Jump Lists and recent items are the result.

This distinction matters because it changes the implied action. A ShellBag can exist because a folder was browsed, previewed in a dialog, or touched as a side effect of another task. A Jump List entry often implies an application had a relationship with a file, but that relationship can include more than deliberate opening. It can include creating a new file, saving a copy, re-opening a previous session, or touching a file for metadata.

If you treat both layers as if they mean the user opened the document, you’ll overstate your conclusions. If you treat both layers as if they mean nothing reliable, you’ll miss valuable context. The middle ground is where good analysis lives.

What “Recent” Means in Windows, in Practice

“Recent” isn’t a single feature. It’s a set of behaviours across the shell, Windows-provided APIs, and application-specific logic.

At a high level, Windows records recent file context through a few mechanisms that commonly appear in DFIR work.

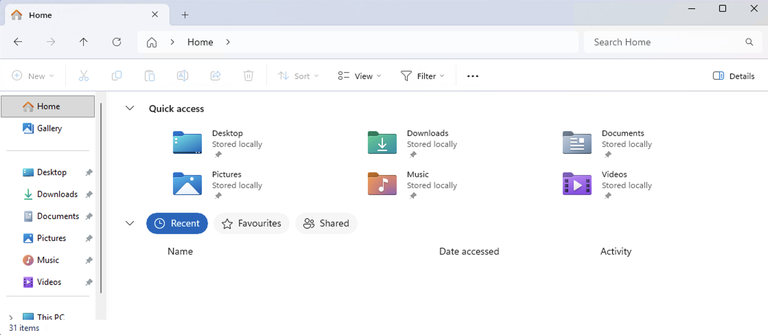

One is the user’s Recent Items shortcuts, stored under %APPDATA%\Microsoft\Windows\Recent:

Windows creates shortcut entries that point to files and sometimes folders that were recently referenced. These shortcuts carry useful metadata about the target, including path information and the shortcut’s own timestamps.

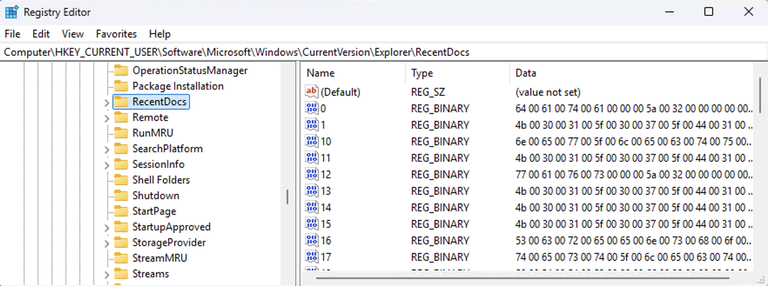

Another is the RecentDocs MRU lists in the user’s registry hive, located at HKEY_CURRENT_USER\Software\Microsoft\Windows\CurrentVersion\Explorer\RecentDocs:

These are lists of recently referenced file names, organised in a way that supports the shell’s own “recent documents” features.

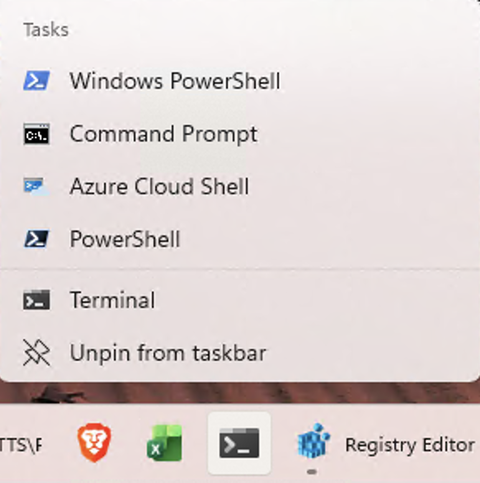

Then there are Jump Lists, which are per-application lists shown in the taskbar and Start menu:

Jump Lists come in two broad forms: automatic lists (%APPDATA%\Microsoft\Windows\Recent\AutomaticDestinations), which Windows maintains based on application usage, and custom lists (%APPDATA%\Microsoft\Windows\Recent\CustomDestinations), which applications build to represent pinned items or app-defined categories.

The useful question isn’t which artefact is better. It’s what triggers each one, and what it means when it changes.
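As a quick orientation, the locations above can be derived from a single profile path. This is a minimal sketch: the function name and the example profile are hypothetical, and it assumes you already know where the user’s roaming AppData folder sits in your evidence (which is what %APPDATA% resolves to on a live system).

```python
from pathlib import PureWindowsPath


def recency_locations(roaming_appdata: str) -> dict[str, PureWindowsPath]:
    """Return the per-user folders that hold shell recency artefacts.

    `roaming_appdata` is the folder %APPDATA% resolves to for the profile,
    for example C:\\Users\\<name>\\AppData\\Roaming.
    """
    recent = PureWindowsPath(roaming_appdata) / "Microsoft" / "Windows" / "Recent"
    return {
        "recent_items": recent,  # .lnk shortcuts
        "automatic_jump_lists": recent / "AutomaticDestinations",
        "custom_jump_lists": recent / "CustomDestinations",
    }


# Hypothetical profile path, used only for illustration.
locations = recency_locations(r"C:\Users\alice\AppData\Roaming")
for name, path in locations.items():
    print(f"{name}: {path}")
```

The RecentDocs key lives in the user’s registry hive rather than on this folder tree, so it isn’t covered here.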

Recent artefacts exist to reduce friction for legitimate work. They’re designed for convenience, not evidence. That doesn’t make them useless. It means they reflect the system’s idea of useful context, which can diverge from an investigator’s idea of user intent.

Behavioural Triggers: How Entries Get Created and Updated

A common mistake in recent-file analysis is focusing on where the files live and what tools can parse them, then treating the output as a timeline. That approach feels productive because it produces lists quickly. It also hides the real question: what happened in the interaction layer that caused Windows or an application to remember this file?

For most environments, recent artefacts are created and updated by three triggers.

The first is deliberate user interaction through normal GUI flows. A user double-clicks a document in Explorer, selects it from an Open dialog, or clicks it from within an application. The application opens the file, Windows records it as recent in one or more places, and a Jump List entry may be updated for that application. This is the case most analysts have in mind, and sometimes it’s exactly what happened.

The second is interaction that’s still user-driven, but not “opening” in the ordinary sense. A user creates a new file through an application. They save a new file. They save a copy to a different location. They export data. Modern Windows versions have expanded what counts as a “recent” reference. In Windows 10 and later, file creation and some file operations can populate recent context in ways that older versions would not. In practical terms, that means you can see recent artefacts for documents a user produced or staged without ever opening them again after creation. It also means a copy or save workflow can leave recency footprints that resemble an “open” workflow.

The third is background application behaviour. Some applications re-open the last session. Some preload recent documents. Some scan recent locations for thumbnails, metadata, cloud sync status, or collaboration features. Some touch files because another component asked them to. These behaviours can update application-level context in ways that are visible in Jump Lists or related recency stores. The user may have started the application, but the specific file reference may not have been a deliberate choice in that moment.

These three triggers can produce artefacts that look similar on the surface. The job is to separate them by reasoning about the environment and corroborating the story.

Jump Lists as Application Memory, Not Application Proof

Jump Lists are often treated as “recently opened documents for a program”. That description is close enough for casual use but dangerous for investigation.

A Jump List is a per-application memory of useful items. It’s meant to help a user return to where they left off. In automatic Jump Lists, Windows maintains the list based on what the application references through shell-integrated mechanisms. In custom Jump Lists, the application curates the content, often including pinned items and app-defined categories.

From an evidentiary perspective, the critical detail is that Jump Lists are application-scoped. They tell you something about the relationship between an application identity and a file reference. That’s different from a shell artefact that only tells you a path was navigated.

When you see a document in a Microsoft Word Jump List, the simplest explanation is that Word opened or saved that document. That’s a reasonable starting hypothesis. It’s not the only explanation, and it’s not proof the user read the content, understood it, or acted on it.

A Word Jump List entry can exist because the file was opened via double-click. It can also exist because the file was created and saved, or because Word recovered it from autosave, or because the user clicked a template that created a new file with the same name pattern. It can also exist because the user opened a file briefly and closed it, or because Word opened it as part of a recovery prompt after a crash. The Jump List is recording that Word thinks this file is relevant recent context. It’s not recording attention or intent.

Automatic Jump Lists tend to carry more temporal meaning because they store last-reference times in a way that supports MRU ordering. That time is usually useful for building a timeline. It also has the MRU problem: the artefact prefers “most recent” over “complete history”. If a file is opened repeatedly, the Jump List won’t preserve a clean list of every open event; it’ll preserve a representation of recency.
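The MRU problem is easier to see with a toy model. The sketch below isn’t how Windows implements Jump Lists; it’s a simplified simulation, with a hypothetical list limit and made-up file names, of any store that keeps one slot per item and a bounded number of items.

```python
def apply_mru(events, limit=3):
    """Replay (time, path) reference events into a bounded MRU list.

    Each path keeps only its latest reference time, and the least recently
    referenced path is dropped once the list exceeds `limit`.
    """
    mru = {}  # insertion order doubles as recency order (oldest first)
    for when, path in events:
        mru.pop(path, None)  # forget the earlier reference, if any
        mru[path] = when     # re-insert as the most recent item
        while len(mru) > limit:
            del mru[next(iter(mru))]  # drop the oldest item
    return list(mru.items())[::-1]  # most recent first


events = [
    ("09:00", "plan.docx"),
    ("09:10", "report.docx"),
    ("09:20", "plan.docx"),
    ("09:30", "notes.docx"),
    ("09:40", "plan.docx"),
    ("09:50", "memo.docx"),
]
surviving = apply_mru(events)
print(surviving)
# [('memo.docx', '09:50'), ('plan.docx', '09:40'), ('notes.docx', '09:30')]
```

Six reference events leave three entries. The three references to plan.docx collapse into one timestamp, and report.docx has rolled off entirely even though it was referenced.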

Custom Jump Lists tend to carry different meaning. They’re often pinned items or curated sets. The presence of a file in a custom list is often evidence of explicit user pinning or application curation. It’s not necessarily evidence of recent use, and it might persist long after the file was last touched. Analysts sometimes treat pinned items as evidence of recent activity because they appear in the same place. That error is common and avoidable if you treat custom lists as a different class of artefact.
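One practical way to keep the two classes apart is to label them before any parsing happens. The sketch below assumes the usual on-disk naming, where the file stem is the application identifier and the extension is `.automaticDestinations-ms` or `.customDestinations-ms`. The AppID shown is a placeholder, not a real application’s identifier, and the interpretation strings are just this post’s framing.

```python
from pathlib import PureWindowsPath

# What each class of Jump List can reasonably support, per the discussion above.
JUMP_LIST_KINDS = {
    ".automaticdestinations-ms": (
        "automatic",
        "MRU-ordered references maintained by Windows",
    ),
    ".customdestinations-ms": (
        "custom",
        "pinned or application-curated items; not necessarily recent",
    ),
}


def classify_jump_list(path: str):
    """Label a Jump List file by kind, or return None for other files."""
    name = PureWindowsPath(path)
    match = JUMP_LIST_KINDS.get(name.suffix.lower())
    if match is None:
        return None
    kind, supports = match
    return {"app_id": name.stem, "kind": kind, "supports": supports}


# Placeholder AppID, used only for illustration.
automatic = classify_jump_list(r"AutomaticDestinations\0123456789abcdef.automaticDestinations-ms")
custom = classify_jump_list(r"CustomDestinations\0123456789abcdef.customDestinations-ms")
print(automatic["kind"], "->", automatic["supports"])
print(custom["kind"], "->", custom["supports"])
```

Carrying the label through to the report makes it harder to present a pinned item as recent activity by accident.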

The broader reasoning skill here is to treat Jump Lists as an “application remembers this” signal. Then you ask what the application does that would cause it to remember.

Recent Items and RecentDocs: Why “Recent” Often Means “Referenced”

The user’s Recent Items shortcuts and the RecentDocs registry keys are sometimes treated as secondary to Jump Lists. In practice they can be equally useful, but they require the same restraint.

Recent Items shortcuts are often created through shell-level APIs that applications and the shell itself use to log “this file should be considered recent”. These shortcuts are convenient because they encode path and target metadata in a form DFIR tooling has supported for a long time.
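To make “target metadata” concrete, here is a minimal sketch that reads the fixed 76-byte header of a shortcut file, following the field layout in Microsoft’s published Shell Link (.LNK) format. It only reads the header; the path information lives in later structures that this sketch ignores. The function names and the synthetic header are illustrative assumptions, not part of any existing tool.

```python
import struct
from datetime import datetime, timedelta, timezone

LNK_HEADER_SIZE = 0x4C
LNK_CLSID = bytes.fromhex("0114020000000000c000000000000046")
FILETIME_EPOCH = datetime(1601, 1, 1, tzinfo=timezone.utc)


def filetime_to_datetime(value: int):
    """Convert a FILETIME (100 ns ticks since 1601-01-01 UTC); 0 means unset."""
    if value == 0:
        return None
    return FILETIME_EPOCH + timedelta(microseconds=value // 10)


def parse_lnk_header(data: bytes) -> dict:
    """Extract the target's recorded timestamps and size from a .lnk header."""
    if len(data) < LNK_HEADER_SIZE:
        raise ValueError("data is shorter than a shell link header")
    size, clsid, _flags, _attributes, created, accessed, written, file_size = (
        struct.unpack_from("<I16sIIQQQI", data, 0)
    )
    if size != LNK_HEADER_SIZE or clsid != LNK_CLSID:
        raise ValueError("not a shell link header")
    return {
        "target_created": filetime_to_datetime(created),
        "target_accessed": filetime_to_datetime(accessed),
        "target_written": filetime_to_datetime(written),
        "target_size": file_size,
    }


# Synthetic header for illustration; real input would be the first bytes of a .lnk file.
stamp = datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc)
ticks = (stamp - FILETIME_EPOCH) // timedelta(microseconds=1) * 10
header = struct.pack("<I16sIIQQQI", LNK_HEADER_SIZE, LNK_CLSID, 0, 0x20, ticks, 0, ticks, 1234)
header += bytes(LNK_HEADER_SIZE - len(header))
parsed = parse_lnk_header(header)
print(parsed)
```

These header values describe the target as it was recorded when the shortcut was written. They’re separate from the file system timestamps of the .lnk file itself, and neither set says what the user did with the content.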

The reasoning trap is assuming the shortcut means the user opened and viewed the file contents. In modern Windows versions, that’s not always what it means.

Windows 10 and later expanded what populates these recency stores. It’s common to see recent entries created as a side effect of creating a new file, saving a file to a location, or performing certain file operations. This matters in investigations where the question is “did the user open that confidential document?”, because a recent entry might instead mean “the user created or staged that document here”.

RecentDocs MRU lists have their own nuance. They store file names in MRU order and update through registry writes. Analysts often use the key LastWrite time as a proxy for last accessed. That can work, but it’s easy to overstate. Registry structures can change for reasons other than a user opening a file. Deleting entries, clearing history, or system behaviour can shift LastWrite times in ways that don’t correspond to genuine file activity. If you treat the registry timestamp as an unquestionable ground truth, you’ll sometimes build a timeline that’s internally consistent and externally wrong.

A more defensible approach is to treat RecentDocs as a hint about what file names entered the “recent” ecosystem, and then use other artefacts to determine what kind of interaction is most likely. It’s evidence of reference and context. It’s not inherently evidence of viewing.
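The limits of the LastWrite proxy are clearer once you look at how the list is ordered. The sketch below assumes the layout commonly described in DFIR write-ups: an `MRUListEx` value holding little-endian 32-bit indexes terminated by 0xFFFFFFFF, and numbered values that each begin with a null-terminated UTF-16LE file name. Reading the hive is out of scope, so the values are supplied as raw bytes, and the sample data is synthetic.

```python
import struct


def parse_mrulistex(data: bytes) -> list[int]:
    """Return value indexes in MRU order (most recent first)."""
    order = []
    usable = len(data) - len(data) % 4  # ignore any trailing partial word
    for (index,) in struct.iter_unpack("<I", data[:usable]):
        if index == 0xFFFFFFFF:  # terminator
            break
        order.append(index)
    return order


def entry_name(data: bytes) -> str:
    """Decode the leading UTF-16LE file name from a numbered value."""
    for offset in range(0, len(data) - 1, 2):
        if data[offset:offset + 2] == b"\x00\x00":
            return data[:offset].decode("utf-16-le")
    return data.decode("utf-16-le", errors="replace")


def make_value(name: str) -> bytes:
    """Build a synthetic numbered value: name, terminator, opaque trailing data."""
    return name.encode("utf-16-le") + b"\x00\x00" + b"\x01\x02\x03\x04"


# Synthetic key contents for illustration only.
values = {0: make_value("old.docx"), 1: make_value("older.docx"), 2: make_value("newest.docx")}
mrulistex = struct.pack("<4I", 2, 0, 1, 0xFFFFFFFF)
ordered_names = [entry_name(values[i]) for i in parse_mrulistex(mrulistex)]
print(ordered_names)  # ['newest.docx', 'old.docx', 'older.docx']
```

The key carries one LastWrite time for the whole list. At best it relates to the entry at the front of the order, and only if that entry is what caused the last write. The other entries have order, not times.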

Why Analysts Over-Interpret These Artefacts

Over-interpretation isn’t usually laziness. It’s pattern hunger.

Recent lists look like user intent. They appear in user-facing UI. They’re organised in human-friendly order. They include file names that feel meaningful. In insider cases, they often include exactly the documents you care about. That alignment makes it tempting to treat them as direct proof.

The second driver is the way these artefacts compress interaction into a single line. “File X was recent in application Y” feels like a clean fact. The messy part is that the line is a summary produced by convenience logic, not a forensic log. It collapses “opened”, “created”, “saved”, “recovered”, “touched for metadata”, and “referenced through automation” into the same human-readable interface.

The third driver is that these artefacts often survive when other evidence is incomplete. If event logs are missing, if file system last access is disabled, or if a file has been deleted, Jump Lists and shortcuts can remain as some of the last remaining context. That can lead to a subtle escalation: analysts start leaning on them harder because they’re what’s available.

The corrective isn’t to dismiss them. It’s to slow down and ask the uncomfortable questions.

What action would create this entry in this environment?

What else should exist if that action occurred?

What else would exist if a different action occurred?

What do I not see, and does that absence matter?

Practical Reasoning: Building a Defensible Interpretation

A defensible interpretation starts with explicit hypotheses.

If you find a sensitive document in a Jump List for an application that normally handles that file type, the baseline hypothesis is that the application opened or saved the file. Then you test it.

You look for supporting evidence that the application executed around that time. Prefetch can sometimes provide supporting context for application execution, but it should be used carefully, and only as a corroborative signal. If Prefetch is absent, that doesn’t prove the application didn’t run. If it’s present, it helps you anchor activity.

You look for file system artefacts that align with an open or save. For an open, you might expect transient files, updates to application caches, or updates to user-level MRUs inside the application itself. For a save or create, you might expect file creation times and, depending on the workflow, evidence of parent folder interaction.

You look for shell-level traces that fit the path story. If the file was opened through Explorer, you might see folder navigation signals that align with that access. If the file was opened through an application’s internal picker, you might still see shell interaction through the common file dialog. If you see no shell navigation context at all, that might suggest a direct path open, automation, or a non-Explorer mechanism.

You also look at what’s missing. Negative space matters here. If an analyst claims “the user opened the document”, but there’s no sign of the application being used in that window and no other corroborating artefacts, the claim is weak. It might still be true, but it’s not supported strongly enough to state it as a conclusion.

The same logic applies in reverse. If you find no recent artefacts for a document, don’t conclude it was never accessed. Recent artefacts can be disabled. They can be cleared. They can roll off due to list limits. Applications can bypass Windows recency mechanisms. The absence is informative, but it’s not definitive. The defensible phrasing is that there’s no observed application-level recency evidence in the available data, and then you explain what that does and does not imply.
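The steps above can be written down as a small bookkeeping exercise. This is a sketch of one way to record it, not a scoring method: the artefact labels are hypothetical shorthand, and the only logic is set arithmetic that keeps “expected but not found” separate from “expected but couldn’t be checked”.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    name: str
    expected: frozenset  # artefacts you would expect if the hypothesis held


def assess(hypothesis: Hypothesis, observed: set, unavailable: set) -> dict:
    """Split expected artefacts into supported, untestable, and missing."""
    expected = hypothesis.expected
    return {
        "supported": sorted(expected & observed),
        # Sources that were disabled, cleared, or not collected: absence says little.
        "untestable": sorted((expected - observed) & unavailable),
        # Sources that were available but showed nothing: this absence matters.
        "missing": sorted(expected - observed - unavailable),
    }


# Hypothetical labels for illustration.
opened_in_word = Hypothesis(
    "Word opened or saved the document",
    frozenset({
        "jump_list_entry",
        "prefetch_for_winword",
        "word_internal_mru",
        "shell_navigation_to_folder",
    }),
)
observed = {"jump_list_entry", "shell_navigation_to_folder"}
unavailable = {"prefetch_for_winword"}  # for example, Prefetch disabled on this host

result = assess(opened_in_word, observed, unavailable)
for category, artefacts in result.items():
    print(f"{category}: {artefacts}")
```

Running the same assessment against a competing hypothesis, such as a save or a session restore, shows which artefacts actually discriminate between the two and which would exist either way.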

Scenarios That Commonly Mislead Investigations

Document Staging and Exfiltration Preparation

In staging cases, the question is often framed as “did the user open the confidential document?” The user’s actual behaviour might be different. They might copy a set of files to a staging folder. They might rename them. They might compress them. They might transfer them to removable media. They might never open them on that system.

On Windows 10 and later, file creation and some file operations can populate recent context. That means you may see recent traces for files that were staged, created, or saved, without a corresponding open event that would imply viewing. An analyst who treats “recent” as “opened” can overstate the case.

The disciplined approach is to separate the activity categories.

Evidence of staging is evidence of handling. It might be stronger for a staging hypothesis than evidence of “opening” would be. It becomes even stronger when combined with other artefacts like removable storage usage, archive creation, or evidence of network transfer. You don’t need to turn it into “they opened and read it” to make it meaningful. If you do, you risk an unsupported claim when challenged.

Insider Disputes About “I Never Looked at That File”

In HR and insider disputes, the stakes are high and the narratives are usually contested. A user might claim they never accessed a particular document. The organisation might point to a “recent files” list as proof.

Jump Lists and Recent Items can be strong evidence that the system associated that file with that user and that application context. They’re not proof the user read or understood the contents. In disputes, that distinction matters. It changes what you can responsibly say.

If a file appears in a Jump List for a user profile and the timeline aligns with other execution evidence for that application, you can often support a conclusion like “the document was opened or saved via this application under this user context”. That’s already significant. If you need to go further into intent or comprehension, you need more evidence, and sometimes you will not have it.

A common trap in these cases is to treat the presence of a file in a recent list as a proxy for deliberate selection. Many applications will re-open the last document on launch, or present recovery prompts that can load documents automatically. Ignoring those behaviours leads to misattribution.

Background Access, Previews, and Automation

Not all file references are intentional. That’s the core problem with interpreting recency.

Some applications create thumbnails and previews. Some index content. Some sync files and touch metadata. Some perform “recent files” bookkeeping that updates on program start. Some are driven by automation, either legitimate scripting or malicious tooling.

These behaviours can create clusters of recent references that don’t match human pacing. They can also create references at surprising times, such as on boot or on application launch, rather than when a user actively browsed to a file.

You don’t need to become an expert in every application’s internals, but you should recognise when a pattern suggests non-interactive behaviour. A dozen documents referenced within a second isn’t a user opening files one by one. A set of references that always occurs at login may be session restoration. A reference that occurs while the user wasn’t logged in could indicate a scheduled task or service-level process.

In these cases, the correct response isn’t to discard the artefact. It’s to narrow what it supports. It supports “the application context referenced this file”. The question becomes who or what drove that reference.
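A pacing check like the one described above is simple to sketch. The function below groups reference times into clusters wherever consecutive references are close together, then keeps the clusters too dense to be one person opening files by hand. The thresholds (one second, five references) and the sample data are assumptions chosen for illustration, not calibrated values.

```python
from datetime import datetime, timedelta


def find_bursts(references, max_gap=timedelta(seconds=1), min_count=5):
    """Return clusters of (time, path) references that look non-interactive.

    A new cluster starts whenever the gap since the previous reference
    exceeds `max_gap`; clusters with fewer than `min_count` items are dropped.
    """
    clusters, current = [], []
    for ref in sorted(references):
        if current and ref[0] - current[-1][0] > max_gap:
            clusters.append(current)
            current = []
        current.append(ref)
    if current:
        clusters.append(current)
    return [cluster for cluster in clusters if len(cluster) >= min_count]


# Synthetic timeline: six references within half a second, then two spaced-out ones.
start = datetime(2024, 1, 15, 8, 0, 0)
references = [(start + timedelta(milliseconds=100 * i), f"doc{i}.docx") for i in range(6)]
references += [
    (datetime(2024, 1, 15, 9, 14), "budget.xlsx"),
    (datetime(2024, 1, 15, 9, 47), "notes.docx"),
]

bursts = find_bursts(references)
for burst in bursts:
    print(f"{len(burst)} references between {burst[0][0]} and {burst[-1][0]}")
```

A flagged burst doesn’t identify what drove it. It just marks a set of references where “the user opened these one by one” is the least likely explanation.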

Contrasting with ShellBags

It can help to keep a simple mental model.

ShellBags tell you about folders that became part of a user’s navigational environment. They answer “where did the user go?”, in a broad sense.

Recent files and Jump Lists tell you about files that became part of an application’s working context. They answer “what did the application consider relevant to return to?”, which often correlates with opens and saves, but not always.

When both layers align, your confidence improves. If a user navigated to a folder on a USB drive and you see application Jump List entries for files on that drive in the same window, that’s consistent with deliberate interaction. If you see ShellBags for a folder but no application-level evidence of file interaction, that supports a weaker conclusion. It may still matter. It just shouldn’t be inflated.

When the layers diverge, it’s an opportunity to learn something about the workflow. A Jump List entry without corresponding shell navigation could suggest the file was accessed through a direct path, through an application’s internal picker, or through automation. Shell navigation without application evidence might suggest browsing without opening, or opening in a way that bypassed the expected application context.

That’s the point of layering. It turns artefacts into reasoning aids rather than verdicts.
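The comparison between layers can be sketched as a simple path match. This assumes you’ve already extracted folder paths from ShellBags and file paths from Jump Lists with your tooling of choice; the function name and the sample paths are hypothetical. It treats the layers as aligned only when a referenced file’s parent folder was itself navigated, which is a deliberately strict choice.

```python
from pathlib import PureWindowsPath


def compare_layers(navigated_folders, referenced_files):
    """Sort paths into aligned, file-only, and folder-only groups."""
    folders = [PureWindowsPath(folder) for folder in navigated_folders]
    aligned, file_only, used = [], [], set()
    for file_path in referenced_files:
        parent = PureWindowsPath(file_path).parent
        if parent in folders:  # PureWindowsPath compares case-insensitively
            aligned.append(file_path)
            used.add(parent)
        else:
            file_only.append(file_path)
    folder_only = [
        original
        for original, folder in zip(navigated_folders, folders)
        if folder not in used
    ]
    return {"aligned": aligned, "file_only": file_only, "folder_only": folder_only}


# Hypothetical extracted paths for illustration.
navigated = [r"E:\Projects", r"C:\Users\alice\Documents"]
referenced = [r"E:\Projects\design.docx", r"\\fileserver\share\plan.docx"]

layers = compare_layers(navigated, referenced)
for group, paths in layers.items():
    print(f"{group}: {paths}")
```

Each group prompts a different question. Aligned paths still need a time window to mean anything, file-only paths point towards direct opens or automation, and folder-only paths are consistent with browsing that went no further.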

How to Write Conclusions That Will Survive Scrutiny

The strongest DFIR writing is careful with verbs.

“Opened” is a strong verb. Use it when you can support it with multiple aligned artefacts and the application behaviour makes sense.

“Referenced” is often the honest verb for recent artefacts. It captures the idea that the file entered the application’s context without claiming it was read.

“Available” and “present” are useful when you’re describing what the system knew, not what the user did.

“Viewed,” “read,” and “understood” are almost never defensible from recency artefacts alone. Avoid them unless you have evidence that supports those states, and in endpoint forensics you often will not.

When you state a conclusion, it helps to explicitly anchor it to what Windows is doing. For example:

The user profile contains application-level recency artefacts indicating that Microsoft Word referenced the document at approximately this time.

That’s accurate and useful. It’s also difficult to undermine, because you’re not claiming more than the artefacts support.
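If it helps to make the verb choice mechanical, the rule can be sketched as a tiny function. The category names and the two-category threshold are assumptions made for illustration. The threshold stands in for “multiple aligned artefacts” and isn’t a standard.

```python
# Independent evidence categories that can corroborate a recency artefact.
CORROBORATION_CATEGORIES = {"execution", "file_system", "shell", "application_mru"}


def choose_verb(corroboration: set) -> str:
    """Pick the strongest verb the available corroboration can carry."""
    aligned = corroboration & CORROBORATION_CATEGORIES
    if len(aligned) >= 2:  # illustrative threshold for "multiple aligned artefacts"
        return "opened or saved"
    return "referenced"


def draft_finding(application: str, document: str, when: str, corroboration: set) -> str:
    """Draft a one-sentence finding anchored to what the artefacts support."""
    verb = choose_verb(corroboration)
    return (
        f"Artefacts in the user profile indicate that {application} {verb} "
        f"{document} at approximately {when}."
    )


weak = draft_finding("Microsoft Word", "plan.docx", "09:40 UTC", set())
strong = draft_finding("Microsoft Word", "plan.docx", "09:40 UTC", {"execution", "file_system"})
print(weak)
print(strong)
```

Note what the function never returns: “viewed”, “read”, or “understood”. No amount of corroboration in this model upgrades the claim that far.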

If you need to discuss intent, treat it as a hypothesis that requires additional evidence. Intent belongs at the intersection of technical traces, behaviour patterns, and external context. Recent artefacts can contribute to that picture. They cannot carry it alone.

What Applications Remember Is Not What Users Meant

Recent files and Jump Lists are a record of what Windows and applications remember as useful context. They’re a convenience layer, not a truth layer.

In many investigations, these artefacts will be some of the clearest available traces linking a user profile, an application, and a document path. That makes them valuable. It also makes them risky, because the temptation to turn “recent” into “opened and read” is strong.

The discipline is to treat these artefacts as evidence of reference and interaction, then to build a story through corroboration. When the wider artefact landscape supports deliberate use, you can say so. When it doesn’t, you should narrow your claim and explain the uncertainty. That’s not weakness. It’s defensibility.

If there’s one idea to carry forward from this post, it’s that application-level artefacts show you what applications remember. They don’t show you what a user understood, agreed with, or intended. In real investigations, that distinction is often the difference between sound analysis and an overreach you can’t support.

In the next post, we’ll move one layer closer to execution. Prefetch is often treated as a simple “it ran” artefact, and it can support that conclusion when the surrounding conditions hold. It also has limits, and those limits matter most in exactly the kinds of cases where recent-file context gets over-interpreted. The theme stays the same: understand what Windows is optimising for, then decide what the artefact can actually support.

References

- Windows 10 Jump List and Link File Artifacts - Saved, Copied and Moved - Larry Jones

- A forensic insight into Windows 10 Jump Lists - Bhupendra Singh; Upasna Singh

- Jump Lists - Digital Forensics Artifact knowledge base contributors

- SHAddToRecentDocs function (shlobj_core.h) - Microsoft

- Start menu policy settings - Windows - Microsoft

- Updates to the RecentDocs Key in Windows 10 - Lee Whitfield

- Jump List Analysis - Harlan Carvey

- Forensics Quickie: Pinpointing Recent File Activity - RecentDocs - 4n6k (pseudonymous; posted as “Anonymous”)

- How does the Windows File Explorer Quick Access recent items feature work? - Keith Miller (Super User)

- How does the Windows File Explorer Quick Access recent items feature work? - music2myear (Super User)

- Windows 10 and Jump Lists - topin89 (Forensic Focus forums)